In my first semester at Georgia Tech, I worked on a semester-long group project for my UX research methods course, where I took on the role of Lead Researcher. The projects in this course are done in collaboration with an external partner. As a group, we were assigned to partner with Georgia Tech’s College of Computing to help them in their efforts to completely overhaul their website. While the College of Computing has the resources to implement their website programmatically, they had no research on the needs and behaviors of the student body, prospective students, faculty, or industry partners in relation to the website. Through our collaboration, we aimed to provide them with both in-depth research and design ideas for them to implement.

Target Users

For this project, the College of Computing team asked us to focus on students – both current and prospective. Georgia Tech’s College of Computing has more than 12,000 enrolled students and receives almost as many applications every year. Through this project, we had the chance to make a positive impact at a very large scale, and the College of Computing is committed to implementing our findings in their website redesign efforts.

Four Areas of Focus

In our initial conversation with the College of Computing, we heard about what they felt were the biggest issues with the website. We then validated and sized these concerns with further research, as explained below.

Phase I: Research

Phase Objectives

- Validation: are the 4 areas of focus the real issues we need to address in this project? Is there another issue that requires more attention?

- Sizing: how major are the concerns within each area? Which areas should we prioritize?

Key Takeaways

- Navigation was the #1 problem on the website

- Content was the #2 problem on the website

- Tenure at Georgia Tech, degree program, and device type (mobile vs. desktop) influence which pages students access on the website

- The current website design is non-responsive and very inaccessible

In this project, we employed several different methods to learn not just about our target users, but also the current website. When we were first assigned the project, I designed a 4-month research plan that aligned with our class deadlines and intermediate deliverables for the College of Computing team. As the Lead Researcher of the project, I delegated some of the lighter research activities, and took the lead on the survey and card sorting tasks.

I. Stakeholder Interviews

To first understand the exact demands of the College of Computing, we conducted stakeholder interviews with members of the administration and an expert on accessibility. These interviews were unstructured, and were led mostly by the participants, who offered their specific needs and issues with the current website. While our interviews with the College of Computing team and administration focused on poor navigation and content strategy, the conversation with the accessibility expert highlighted special tools and considerations to keep in mind when we moved into the design phase of our project.

“The website lacks clear navigation to useful resources for students. I would like to have a single place that I can point students to.”

“Truly accessible websites require usability and programmatic testing”

We moved on to several other research activities to study not just our original four themes, but also validate these opinions against the needs of the student body.

II. Survey

This survey was my personal baby in this project. I love designing surveys, especially in Qualtrics.

Through this survey, I aimed to probe into each of our 4 themes and collect data directly from the student body. A member of the College of Computing’s Communications team helped me send out the survey to all the students in the college, and I collected 195 viable responses across degree programs and tenures at Georgia Tech. As a proxy for prospective students, 1st year students were directed to a different survey block, where they answered almost the same questions as other students, but in the context of their application process (i.e. “When you were applying to Georgia Tech, …”).

Here are the probes and key takeaways, categorized by the 4 major themes of this project:

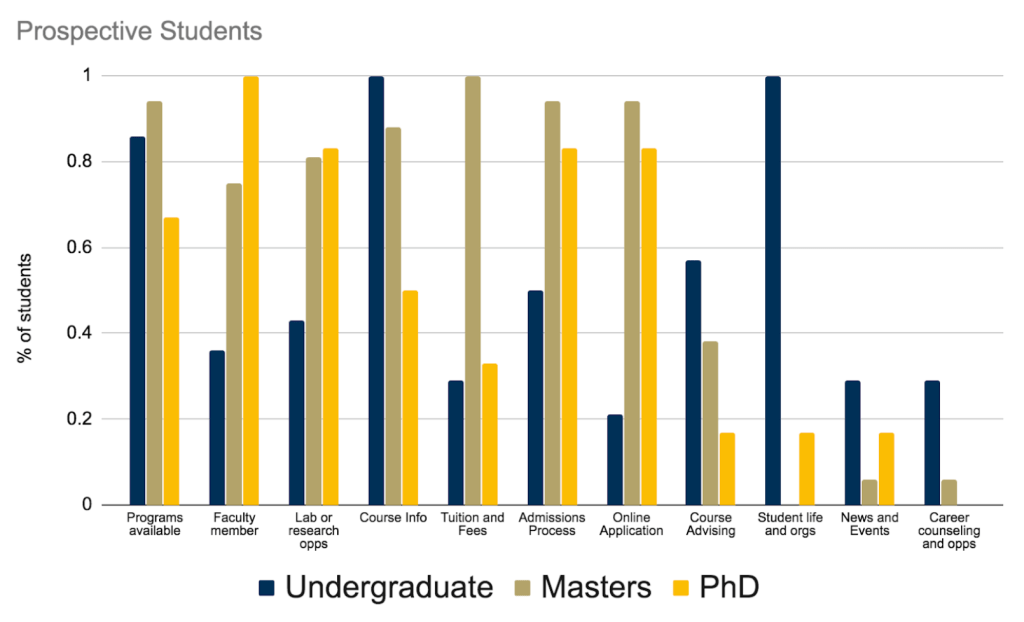

Because the survey began with demographic questions on the participant’s tenure and degree program, I was able to segment the subsequent data by specific user groups. For example, we were able to identify specific topics of interest on the website by degree program and by current versus prospective status.

These findings were valuable because they helped us identify the specific user segments that certain topics or pages should be designed for. While the website is a resource for all current and prospective students, the distribution of interest indicates that content on the site can be developed with specific audiences in mind.

Through the survey, I was able to also identify and rank problems with the website, according to students.

This made navigation and content our top priorities for the rest of the project. While concerns were mostly shared between current and prospective students, a higher number of prospective students indicated the information on the website did not answer their questions. Furthermore, current students were aware of what information could (not) be found on the website. This supported a point that an academic advisor made: current students and the administration are familiar with the website and its limitations. The website’s navigation and content are more problematic for new or prospective students.

The best part of this survey was that many of our findings were corroborated by our other research methodologies. These survey results mirrored the issues, opinions, and behaviors we surfaced across our research activities, giving us confidence and focus in our next steps.

III. Competitive Analysis and Heuristic Evaluation

While exploring the website on our own, we realized that much of the content, information architecture, and UI design was outdated. We decided to compare the website to similar institution sites, to pinpoint and prioritize specific areas of improvement for each of our four themes: navigation, design, content, and accessibility.

To evaluate each website, we pulled heuristics from several different sources commonly used to perform heuristic evaluations. These included:

- Abby IA by Abby Covert

- Nielsen’s Usability Heuristics

- Shneiderman’s 8 Golden Rules

- W3C Accessibility Guidelines

The competitive analysis surfaced specific issues to address in our own redesign of the College of Computing website. These included:

- The website is not responsive on mobile

- Navigation across site pages is unintuitive and messy due to an unclear site structure

- The website holds too much content that needs to be trimmed and placed in the appropriate location

- The website lacks clear style and branding guidelines

IV. Website Analytics

We next looked at the website analytics, which were provided to us by the College of Computing. The analytics provided a more quantitative grasp on the traffic, behavior, and areas of interest of website visitors. While the analytics provided rich (and fun!) data to comb through, we chose to focus primarily on navigation and content. We first segmented site visitors and identified what areas of the website each group explored. This was to capture some of the differences between our target user groups: current and prospective students.

We also saw that site visitors usually used Google to find the specific topic they were interested in, and then went directly to that page on the website. In comparison, very few visitors used the website’s internal search engine or clicked through the website to find information. As part of our suggestions to the College of Computing’s development team, we will stress search engine optimization so that external search queries quickly surface the appropriate site page.

Finally, when exploring the types of pages visited from various devices and platforms, we saw that topics accessed through mobile tended to be ‘quick’. These pages did not usually hold large amounts of content or include Calls to Action (CTAs). In contrast, pages visited on desktop were content heavy, and led to several other pages or forms such as the admissions application. This was a trend we also saw in our survey results, where students self-reported topics of interest and categorized them by device.

V. Hybrid Card Sorting Task

As we moved away from the more exploratory research phase, we wanted a baseline to create a new information architecture (IA). From our other research, we saw that navigation was the biggest issue with the current website, and chose to make that our primary focus for the rest of the semester. To build a new IA, we first wanted to understand how students would categorize and organize the website’s high-level information through a card sorting task. This was the second research activity that I took the lead on organizing and conducting.

For this card sort, I pulled the categories from tabs on the current website’s global navigation. For the cards, I used the dropdown items for each tab. I met the participants in person, and for each participant, I randomly organized the categories across a table. I then gave the participant a shuffled deck of the cards, asking them to categorize the cards as they saw fit. Participants also had the option to add or remove any cards or categories, making this a hybrid card sort, as opposed to a purely open or closed one. While sorting the cards, I asked participants to think out loud and provide their reasoning for each categorization.

To analyze the data, I worked with other team members to create a table of the categories that emerged, based on the frequency of cards placed in them. Some categories from the current website were completely removed, while others were added in.
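The frequency table described above can be sketched in a few lines of Python. This is a minimal illustration, not the analysis we actually ran: the card names, category names, and the `sorts` structure are all hypothetical stand-ins, and the real study had more cards and participants.

```python
from collections import Counter, defaultdict

# Hypothetical sort results: one dict per participant, mapping card -> chosen category.
# Card and category names are illustrative only, not the study's data.
sorts = [
    {"Advising": "Current Students", "How to Apply": "Prospective Students", "Tuition": "Prospective Students"},
    {"Advising": "Current Students", "How to Apply": "Prospective Students", "Tuition": "Current Students"},
    {"Advising": "Academics", "How to Apply": "Prospective Students", "Tuition": "Prospective Students"},
]


def placement_counts(sorts):
    """Count, for each card, how many participants placed it in each category."""
    counts = defaultdict(Counter)
    for participant in sorts:
        for card, category in participant.items():
            counts[card][category] += 1
    return counts


def top_category(counts):
    """Return each card's most frequent category and the share of participants who chose it."""
    result = {}
    for card, by_category in counts.items():
        category, n = by_category.most_common(1)[0]
        result[card] = (category, n / sum(by_category.values()))
    return result


counts = placement_counts(sorts)
summary = top_category(counts)
for card, (category, agreement) in summary.items():
    print(f"{card}: {category} ({agreement:.0%} agreement)")
```

Cards with low agreement are the ones worth revisiting in think-aloud notes, since they tend to signal a category label that participants read differently.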

One key change that emerged in the card sorting was that students wanted to see a distinction made between resources for prospective and current students. Taking this and the rest of the results of the card sorting into account, we set out to create a new IA for the global navigation in the next phase of our project.

Phase II: Information Architecture and Components Based Design

Phase Objectives

- Create a high-level information architecture and content strategy informed by students’ mental models

- Identify the building blocks (components) of site pages to create a consistent design and content vocabulary, and templatize the website development process

- Design 2 interactive tabs on the website to validate the new IA and components against users

Outcomes

- A new, high-level sitemap of global and sub-navigational elements

- Components for select pages within 2 tabs in the new sitemap

- Interactive prototype of linked pages used in user testing

In this phase, we began designing and building a new website architecture in response to the concerns we had surfaced in the research phase. One key characteristic of the website that we had to consider was its sheer amount of content. Given the limited time frame and resources, we could not possibly redesign the entire website. This led us to develop only the first two levels of the site map (the global navigation and its sub-elements), and then deep dive into two new tabs to test specific designs. Further, we wanted to create designs that could be used as templates for other pages, so that when we handed the project off to the College of Computing, they could easily adopt our work.

A New Sitemap

Using the findings of the card sorting task, we created a new global navigation, which included specific tabs devoted to resources for current students and for prospective students. These were the two tabs that we chose to focus on for the rest of the project, and were part of the interactive prototype we later developed.

Components Based Design

In light of the quantity of content on the website, we wanted to create a more streamlined design process that did not require each page to be designed separately. We took inspiration from Brad Frost’s Atomic Design to create components made of text, media, and interactive elements like buttons. The components were templates that could then be stacked together to create whole web pages across the website. As part of our deliverable for this project, we designed a components library that was suited for both desktop and mobile platforms.

A Solution for Accessibility

Because components based design is meant to streamline the design process, we saw this as a way to also systematically create a more accessible website. Many accessibility features, such as alternative text and ARIA attributes, need to be implemented programmatically. With components based design, we templatize the development process as well, so if single components were coded with accessibility features, the website as a whole would become more accessible.
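To make the idea concrete, here is a rough sketch of what "accessibility coded into the component" could look like. It is only an illustration: our prototypes were not implemented in code, and the function names (`image_component`, `card_component`) and the markup they emit are assumptions made for this example.

```python
from html import escape


def image_component(src: str, alt: str, decorative: bool = False) -> str:
    """Render an image. Alt text is mandatory unless the image is purely decorative."""
    if decorative:
        # An empty alt attribute tells screen readers to skip the image.
        return f'<img src="{escape(src)}" alt="">'
    if not alt.strip():
        raise ValueError("image_component requires alt text for non-decorative images")
    return f'<img src="{escape(src)}" alt="{escape(alt)}">'


def card_component(title: str, body: str, image_html: str) -> str:
    """Stack an image, a heading, and body text into one labeled, reusable block."""
    return (
        f'<section aria-label="{escape(title)}">'
        f"{image_html}<h2>{escape(title)}</h2><p>{escape(body)}</p>"
        "</section>"
    )


# Hypothetical usage: every page built from these components inherits the same checks.
card = card_component(
    "Advising",
    "Find your academic advisor.",
    image_component("advising.png", "A student meeting with an advisor"),
)
print(card)
```

Because the check lives in the component, a page author cannot forget the alt text on one page while remembering it on another.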

Building Pages

To create pages for our prototype, we took two possible pages under the new ‘Current Students’ and ‘Prospective Students’ tabs, and designed some components with sample text. We then asked peers to organize the components on blank pages according to the order in which they would like to see that content. This allowed us to identify in-page content hierarchies and create pages for our prototype that were based on user feedback.

Phase III: Prototype Evaluation

Phase Objectives

- Design clickable prototypes of selected pages for desktop and mobile

- Test the prototype for navigation and design

- Repeat the same heuristic evaluation as Phase I to confirm improvements

Outcomes

- A medium-fidelity prototype with newly designed pages that were linked and clickable

- Improvements to website navigation and design for both desktop and mobile

- Qualitative feedback from experts about the new designs

In this phase, we used the new site map and components to create desktop and mobile prototypes with a new global navigation bar and specific pages within the ‘Current Students’ and ‘Prospective Students’ tabs. These pages were linked to each other and had clickable elements to allow for some navigation through the website. We then tested these prototypes with one expert-based and one user-based method.

Heuristic Evaluation by Experts

I asked four experts to evaluate the new designs based on the same heuristics we used in Phase I. This was to give us a quantitative grasp on whether the new designs had actually improved the website within the original four areas of focus.

For the heuristic evaluations, I gave my participants the links to the desktop and mobile prototypes, and asked them to find specific topics within the website. After exploring the prototypes, participants gave scores for how well each heuristic was satisfied, which I averaged and compared across the two evaluations.
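The averaging and comparison step looks roughly like the sketch below. The scores and the 1 to 5 scale are hypothetical, chosen only to show the calculation; they are not the ratings our evaluators gave.

```python
from statistics import mean

# Hypothetical evaluator scores on an assumed 1-5 scale (higher is better).
# One list entry per evaluator; these are not the study's actual ratings.
phase1_scores = {"navigation": [2, 3, 2, 2], "design": [3, 2, 3, 3]}
phase3_scores = {"navigation": [4, 4, 3, 4], "design": [4, 4, 4, 3]}


def average_by_category(scores):
    """Average the evaluators' scores within each category."""
    return {category: mean(values) for category, values in scores.items()}


def score_change(before, after):
    """Difference in average score (after minus before) for categories present in both."""
    avg_before = average_by_category(before)
    avg_after = average_by_category(after)
    return {c: avg_after[c] - avg_before[c] for c in avg_before if c in avg_after}


deltas = score_change(phase1_scores, phase3_scores)
for category, delta in deltas.items():
    print(f"{category}: {delta:+.2f}")
```

With only four evaluators, a difference like this is best read as a directional signal rather than a statistically tested result.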

By comparing the results of the two heuristic evaluations, we saw a distinct improvement in the information architecture and design categories. Because we had placeholder content on the prototypes, our evaluators were not able to give valid or confident reviews of the content category. Furthermore, because the prototypes were not implemented programmatically, we could not include and test any accessibility features. I provided verbal detail on where we might include them, but the prototypes themselves did not include elements such as alternative text or screen reader compatibility.

Testing on UserTesting.com

Another team member decided to use UserTesting.com to perform an A/B test between the legacy and redesigned websites. Each participant was shown either the legacy or the redesigned website, on either desktop or mobile (i.e. 4 conditions: legacy desktop, legacy mobile, redesigned desktop, redesigned mobile). Participants were asked to find specific topics on their version of the website while being timed, and then asked questions about their experience. In the end, we had the results from 11 participants, which showed a general decrease in time on task and increase in ease of use for the redesigned website.

Final Recommendations

In our final presentation to the College of Computing, we provided specific recommendations for each area of focus based on our findings.

Information Architecture

- Continue developing the site map

- Have multiple paths to content, but have a consistent URL format to improve SEO

- Confirm subsections of the site map with users

Content

- Have a strategy for content creation and management; website content should be added in a concise and intentional manner

- Combine content strategy with user research by asking users to prioritize content as they would like to see it

- Provide external content sources such as labs and on-campus centers with templates to maintain design and content consistency

- Link to external resources to minimize the number of broken links and upkeep efforts

Design

- Parallelize the design process between mobile and desktop platforms; the mobile website should not just be a smaller version of the desktop site

- Use the components based design approach to minimize design effort while maintaining a consistent style

Accessibility

- Incorporate alternative text into the markup/code for all images

- All audio/visual content should have transcripts

- Color and highlights should not be the only ways to differentiate between content and types of text

- Text, images, and the screen background should have a contrast ratio of at least 4.5:1

- The website’s interactive elements should be fully operable with a mouse alone or with a keyboard alone

- Content should be ordered in a meaningful and hierarchical way to present more relevant information first and reduce time on task with screen readers
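The 4.5:1 contrast recommendation above can be checked programmatically. The sketch below follows the relative luminance and contrast ratio definitions from WCAG 2.x; the function names and the sample colors are my own choices for illustration, not part of our deliverable.

```python
def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel to its linear value, per the WCAG 2.x definition."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a color given as '#rrggbb'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(foreground: str, background: str) -> float:
    """Contrast ratio between two colors, from 1 (identical) to 21 (black on white)."""
    l1 = relative_luminance(foreground)
    l2 = relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


def passes_minimum_contrast(foreground: str, background: str, minimum: float = 4.5) -> bool:
    """True if the pair meets the given minimum contrast ratio."""
    return contrast_ratio(foreground, background) >= minimum


# Example gray values on a white background (illustrative, not the site's palette).
print(passes_minimum_contrast("#767676", "#ffffff"))
print(passes_minimum_contrast("#777777", "#ffffff"))
```

A check like this could run against a components library's color tokens so that a failing text and background pair is caught before it reaches a page.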